Key Takeaways

- Inference performance and cost are increasingly driven by memory and data movement

- Agentic AI requires persistent, long‑lived context, which in turn needs mass-capacity hard drive storage

- Multi‑tier architectures (hard drives + GPU memory + NVMe SSD) help scale context without runaway cost

Agentic AI has emerged as the next operational frontier of value.

Organization leaders need AI systems that can plan, act and improve over time — agents that execute multi-step workflows and deliver critical business outcomes.

But as complexity and query volume increase, the limits of context retention those agents rely on are becoming hard to ignore.

Agents can become forgetful — not because the model isn’t capable, but because its usable, persistent context memory is limited.

The AI ecosystem has a name for this: the context wall.

The context wall is the point at which an agent runs out of working context and has to summarize, drop information, or repeatedly retrieve and re-check previously accessed facts. That slows inference, increases cost and often degrades quality. The result: inconsistent answers and lost threads.
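One way to picture the context wall is as a fixed token budget. The sketch below is illustrative only: `fit_to_budget`, the turn labels and the token counts are hypothetical, and real agents use more sophisticated strategies such as summarization. It keeps the most recent turns that fit the budget and reports what had to be dropped.

```python
from collections import deque


def fit_to_budget(turns, max_tokens):
    """Keep the most recent turns whose combined token count fits the budget.

    `turns` is a list of (label, token_count) pairs, oldest first.
    Returns (kept, dropped), both oldest first.
    """
    kept = deque()
    used = 0
    # Walk from newest to oldest and stop at the first turn that does not fit.
    for label, tokens in reversed(turns):
        if used + tokens > max_tokens:
            break
        kept.appendleft((label, tokens))
        used += tokens
    dropped = turns[: len(turns) - len(kept)]
    return list(kept), dropped


# Hypothetical agent history; the token counts are made up for illustration.
turns = [
    ("policy lookup", 400),
    ("ticket history", 300),
    ("user question", 200),
    ("tool result", 250),
]
kept, dropped = fit_to_budget(turns, max_tokens=800)
print("kept:", [label for label, _ in kept])
print("dropped:", [label for label, _ in dropped])
```

In this sketch, anything in `dropped` is simply gone unless it has been persisted somewhere the agent can reach later, which is the gap the rest of this article addresses.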

The context wall quickly becomes a business issue. It shows up as:

- Higher compute bills (more rework, more retrieval cycles, more tokens)

- Slower responses (latency from recomputing or reloading context)

- Lower trust (inconsistent behavior across sessions)

- Limits on capability (agents can’t sustain long-horizon tasks)

Scaling the context wall is only partly about improving models. It’s mainly about how you store and serve context.

The joint solution for agentic AI

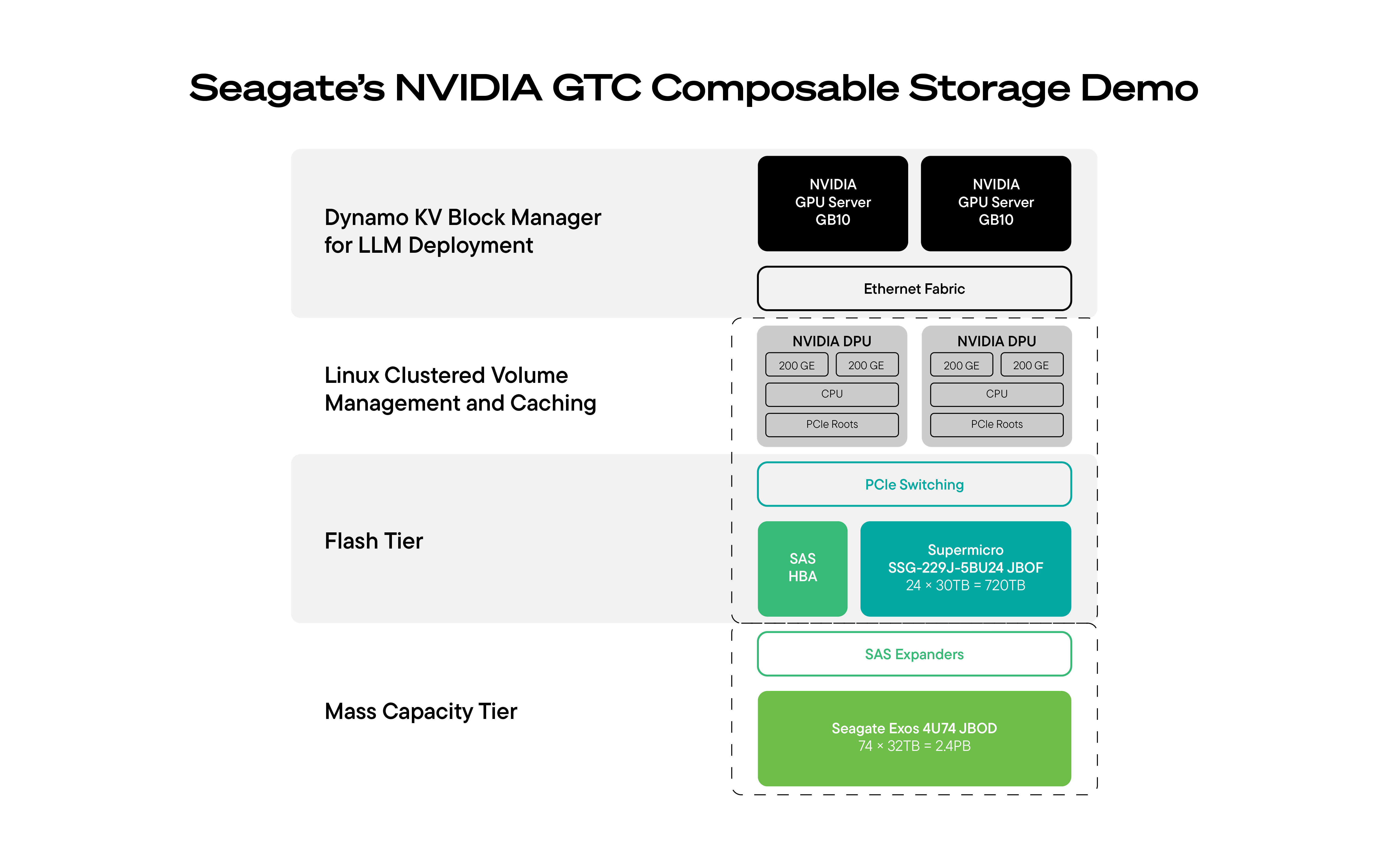

To address this challenge, Seagate and its partners introduced a commercially available, production-ready multi-tier AI storage solution at NVIDIA GTC, designed to extend context for AI workloads.

The solution demonstrated at GTC combined:

- NVIDIA DGX Spark GPU cluster compute node running inference at scale

- Supermicro JBOF as a high-speed networked NVMe SSD cache tier to keep immediate context close to compute

- Seagate hard drive JBOD as a scalable, high-capacity storage tier to provide long-lived context affordably

- NVIDIA BlueField-3 or NVIDIA BlueField-4 DPUs to offload and accelerate data movement and caching between the storage tiers, and to place data directly in GPU memory

- DPU-orchestrated open-source components (NVIDIA Dynamo) to intelligently cache hard drive resident datasets through SSDs

This architecture matters not only because it extends context, but also because it reframes how organizations should think about AI inference economics. Once agent workloads move into production, memory and data movement become central to performance, cost and reliability — not just model quality.

“Combining Supermicro’s JBOF flash tier and Seagate’s hard drive tier can dramatically reduce inference costs while providing high performance,” said Vik Malyala, President and Managing Director, EMEA, and SVP, Technology and AI, Supermicro. “This is especially important as agentic AI becomes widely adopted and the inference workloads grow exponentially.”

Turn memory into a competitive advantage

Here’s the shift that’s easy to miss: inference is becoming a memory problem as much as a compute problem. GPUs are powerful, but to be productive, they need the right data delivered at the right time, at the right speed and at the right cost.

Agents are hungry for more context storage. In addition to prompts, they need to keep track of:

- Long conversation and decision history

- Policies and procedures

- Product and troubleshooting knowledge

- Logs, tickets and telemetry

Trying to keep all that in the immediate-access tier (GPU memory or all-flash) is like insisting an entire company run off premium same-day shipping: great for a few packages; financially absurd at scale.

The winning approach relies on multi-tier, persistent storage architectures.

Why multi-tier storage is the practical answer

A smart AI stack separates short-term memory from long-term memory and uses each tier for what it does best:

- Real-time access tiers (GPU HBM memory, CPU DRAM, local and network NVMe SSDs): handle right-now context, such as active tokens, hot embeddings and frequently accessed data

- Capacity tiers (built from hard drives): hold long-horizon context, such as large datasets, long-lived histories and extended agent memory

The business value comes from a simple principle: automate data placement across all tiers, so that GPUs stay busy, costs stay under control and context stays deep.
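To make the placement principle concrete, here is a minimal sketch of a two-tier store with automatic promotion and eviction. It is an illustration under stated assumptions, not the demonstrated system: `TieredContextStore` is a hypothetical name, plain in-memory dictionaries stand in for the SSD and hard drive tiers, and least-recently-used eviction is just one possible policy.

```python
from collections import OrderedDict


class TieredContextStore:
    """A small LRU 'hot' tier in front of a large capacity tier (illustrative only)."""

    def __init__(self, hot_capacity: int):
        self.hot_capacity = hot_capacity
        self.hot = OrderedDict()  # stands in for GPU memory / NVMe SSD
        self.capacity = {}        # stands in for the hard drive tier
        self.hot_hits = 0
        self.capacity_reads = 0

    def put(self, key, value):
        # Persist every item to the capacity tier, then cache it in the hot tier.
        self.capacity[key] = value
        self._promote(key, value)

    def get(self, key):
        if key in self.hot:
            self.hot.move_to_end(key)  # mark as most recently used
            self.hot_hits += 1
            return self.hot[key]
        value = self.capacity[key]     # slower path: read from the capacity tier
        self.capacity_reads += 1
        self._promote(key, value)
        return value

    def _promote(self, key, value):
        self.hot[key] = value
        self.hot.move_to_end(key)
        # Evict least recently used entries; they remain on the capacity tier.
        while len(self.hot) > self.hot_capacity:
            self.hot.popitem(last=False)


store = TieredContextStore(hot_capacity=2)
for session in ("s1", "s2", "s3"):
    store.put(session, f"history for {session}")

# "s1" was evicted from the hot tier, but it is still retrievable.
print(store.get("s1"), store.hot_hits, store.capacity_reads)
```

The point of the sketch is that the caller never chooses a tier: placement follows access patterns, and nothing is lost when the hot tier fills up.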

How DPUs optimize the data plane

Historically, combining performance tiers and capacity tiers for AI has been messy. It often required complex proprietary file systems, heavy CPU overhead and fragile tuning — especially as data volumes ballooned.

That’s changing because of data processing units (DPUs).

DPUs can offload and accelerate data movement, so the system doesn’t burn host CPU cycles just to shuffle bytes. They enable high-speed networking and storage access patterns, and they can run standard Linux-based services for caching, tiering, resiliency and security. In short, DPUs help make multi-tier AI storage deployable and scalable.

That’s what makes a multi-tier design workable at production scale.

What the multi-tier architecture enables

The Seagate, Supermicro and NVIDIA architecture brings together the core components needed to extend AI context cost-effectively at scale: GPU compute for inference, hard drives for high-capacity long-lived context, NVMe SSDs for immediate access, and DPUs to coordinate data movement and caching across tiers.

That combination promotes the business outcomes customers care about most.

Deeper agentic context means better business value

What does this approach mean for customers?

1. Better agent memory and better outcomes

Agents can access far more historical data than fits in GPU-adjacent storage. That supports longer-horizon reasoning, richer personalization and fewer failures caused by forgotten context.

2. Lower cost to scale context

Hard drives deliver a dramatically lower cost per TB than flash or GPU memory for long-term memory. That matters because datasets and agent histories grow continuously.
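The cost effect of tiering can be expressed as a simple weighted average. The sketch below assumes a hypothetical helper, `blended_cost_per_tb`, and uses placeholder cost units rather than real prices; substitute your own figures for each tier.

```python
def blended_cost_per_tb(hot_fraction: float, hot_cost: float, capacity_cost: float) -> float:
    """Weighted average cost per TB when only `hot_fraction` of data sits in the fast tier."""
    if not 0.0 <= hot_fraction <= 1.0:
        raise ValueError("hot_fraction must be between 0 and 1")
    return hot_fraction * hot_cost + (1.0 - hot_fraction) * capacity_cost


# Placeholder inputs, NOT real prices: the fast tier is assumed to cost 8 units
# per TB, the capacity tier 1 unit per TB, and 25% of the data is kept hot.
all_fast = blended_cost_per_tb(1.0, hot_cost=8.0, capacity_cost=1.0)
tiered = blended_cost_per_tb(0.25, hot_cost=8.0, capacity_cost=1.0)
print(all_fast, tiered)  # 8.0 2.75
```

Because the hot fraction tends to shrink as total history grows, the blended figure moves toward the capacity-tier cost over time under these assumptions.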

3. Efficiency as the next optimization frontier

Organizations track performance (tokens per second) as well as efficiency, including metrics such as power per token and sustained GPU utilization. Multi-tier designs help reduce wasted work (reloading, reprocessing, re-retrieving) and keep GPUs productive.
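These metrics are straightforward to compute from basic measurements. The sketch below is a hedged illustration: the function names and sample readings are hypothetical, and "power per token" is interpreted here as energy per token in joules (average power multiplied by elapsed time, divided by tokens), which is an assumption about the intended metric.

```python
def tokens_per_second(tokens: int, seconds: float) -> float:
    """Throughput over a measurement window."""
    return tokens / seconds


def energy_per_token_joules(avg_power_watts: float, seconds: float, tokens: int) -> float:
    """Energy spent per generated token: watts x seconds = joules, divided by tokens."""
    return avg_power_watts * seconds / tokens


def gpu_utilization(busy_seconds: float, wall_seconds: float) -> float:
    """Fraction of wall-clock time the GPU spent doing useful work."""
    return busy_seconds / wall_seconds


# Hypothetical readings from a 100-second window (made-up numbers).
tokens, seconds = 50_000, 100.0
print(tokens_per_second(tokens, seconds))
print(energy_per_token_joules(400.0, seconds, tokens))
print(gpu_utilization(busy_seconds=80.0, wall_seconds=seconds))
```

Time spent waiting on reloads or re-retrieval lowers `gpu_utilization` and raises energy per token, which is why data placement shows up in these numbers.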

4. Alignment with where AI infrastructure is headed

DPU-driven data planes are becoming central to modern AI system design. This approach aligns with that direction by building for scalable data delivery, not just raw compute.

Proof, not promises: The GTC demo and what comes next

At GTC, this architecture was demonstrated in a running system — with GPUs for inference, hard drives for massive, deep context, SSDs for immediate access and DPUs orchestrating efficient data movement and caching.

AI is still in an early stage of growth. It will continue to consume and generate massive volumes of data. Together, Seagate, Supermicro and NVIDIA are enabling that future with architectures that are more sustainable, more efficient and built for scale.

The organizations that scale agents successfully will be the ones that treat context as a strategic asset — and build infrastructure that can store and serve that context efficiently.

Talk to an expert about how Seagate can enable your organization to scale the agentic context wall.